It seems like AI is everywhere these days. Half the posts I read on LinkedIn are touting amazing things people are doing with AI to power their missions and make their jobs easier. The other half are cautioning people about ethical, privacy, and environmental consequences of using AI.

I believe it’s imperative that we as a society figure out how to use AI ethically and for the greater good. As with many great leaps forward, agreement on standards and legal guidelines will lag. One thing seems certain, though: AI isn’t going away, so it behooves us to figure out how to use it and live with it right now.

As I was recently watching Star Trek: Strange New Worlds, it occurred to me that we can take some lessons about using AI from Star Trek through the years. Here are my takeaways for how nonprofits and our industry can think about AI.

1. AI is your tool, not your friend.

The computer on board starships and space stations is essentially a large database with a voice-activated interface. Captains and crew use the computer to complete routine tasks like setting a course, controlling the climate, and accessing data.

But the computer shouldn’t be allowed to run the whole ship, as Captain Kirk anticipated about the M-5 Multitronic System on the Enterprise.

What we don’t see captains doing is talking to the computer as if it’s a friend or asking it to write content for them. Even the Holodeck, the ultimate generative AI experience in the Star Trek universe, often serves as a cautionary tale. People who use holograms and the holodeck to escape their lives during deep space exploration inevitably suffer professional and personal consequences.

Captain Janeway lost touch with her priorities and ethics for a time during the extended holodeck simulation Fair Haven aboard Voyager.

Do’s and Don’ts for Nonprofits

- Do use AI for research, background information, and simple tasks that can free up staff time. Verify the results with primary sources, especially if you’re researching something specific and detailed. AI isn’t always right, and it’s programmed to try to please you with answers, even if they’re not correct.

- Don’t use AI to write your fundraising letters or emails, or as an extra staff person. I don’t even subscribe to the “give me a first draft and I’ll correct it” method, because I think it locks your brain into the format and content the AI came up with first. People can spot AI-written content, and it breeds contempt. We don’t want contempt for our missions.

Also, don’t talk to an AI as if it’s one of your staff members, especially for thorny decisions or with confidential information. Many AIs say they don’t use your data to train their larger models. But do you trust Big Tech Companies? I don’t. They’ve breached trust one too many times.

2. Rely on your team, not your AI.

Whenever a starship captain is on the horns of a dilemma, we hear a shipwide announcement: “Senior staff to the conference room!” Then we witness a spirited discussion about the situation, debate about what to do, and finally, a decision.

The Doctor on Voyager may be a sentient AI, and Seven of Nine is part Borg, but Captain Janeway was the one to negotiate a ceasefire with Species 8472.

What we never see is a captain abdicating their decision-making to a machine. They use computers to run simulations and predict probabilities, but the debate happens between life forms, and the ultimate decision-making is in the hands of the captain.

Do’s and Don’ts for Nonprofits

- Do make time to talk with your staff about problems, challenges, and decisions that need to be made. Do it in person if you’re in the office together, or a live video call if you’re all remote. Run a good meeting. Solicit input from a variety of people, both front-line staff and executives. Listen, synthesize, and then decide. Explain your decision to staff.

- Don’t bypass your people in the name of efficiency. Even if the answer to a problem seems obvious, and even if AI has told you it’s the best thing to do, you will not build a great team or develop your staff into leaders by cutting them out of thought processes and decisions. Also, I repeat: AI isn’t always right. And it’s not always benevolent!

Both Peanut Hamper and Badgey on Lower Decks are examples of AIs who went rogue.

3. AI has great potential. It’s not all bad.

This Washington Post article about smart glasses (gift link) doesn’t make me want to wear them anytime soon, but I can see the potential. The most intriguing part of the article, to me, was how the glasses can provide real-time translation during conversation.

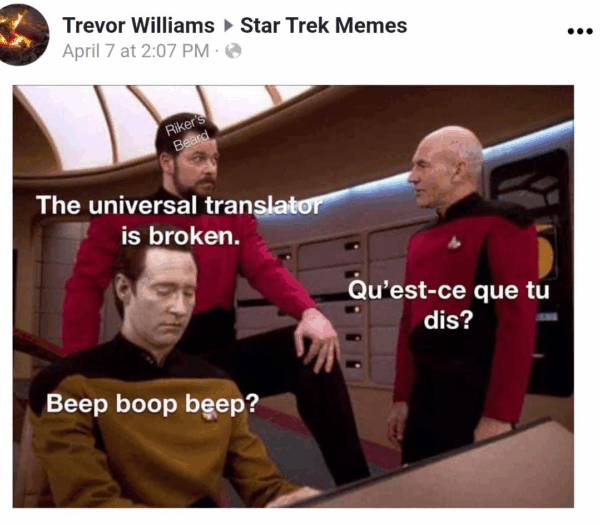

Of course, that reminded me of Star Trek’s Universal Translator. After struggling my way through a recent family vacation to Peru, where my high school and college Spanish was not up to the task of colloquial conversation, I can see the value there. Even using Google Translate on my phone caused delays and awkwardness, and didn’t always yield the correct word or phrase.

Important question: if Captain Picard is from France, why does he have an English accent?

For nonprofits, the ability to communicate directly with people, whether or not they share a language, could help their work. And for people who need assistance, the ability to be understood can increase their connection to the organization.

Anything that helps us communicate more easily with each other, and dare I say, to understand each other, is a good thing in my book.

Do’s and Don’ts for Nonprofits

- Do pay attention to new AI-driven tools and apps. Stay current on what’s out there and evaluate whether it could help your work. Do thorough research into how a new program works and how it can fit into your tech stack. Make sure it complies with your organization’s data security and privacy standards.

- Don’t jump to be an early adopter of every shiny new AI program. Some are flashes in the pan, and may come with data privacy and security problems that we only find out about down the road. In general, nonprofits should make sure they are extremely good at the fundamental building blocks of executing their missions and raising money before adding cutting-edge technology that talks a big game but doesn’t always deliver. It’s often a way to spend millions of dollars and have nothing to show for it.

4. Human skills have always been important. They’re becoming even more important.

One thing we’ve learned during our careers in nonprofit tech is that tech problems are rarely JUST tech problems. There’s almost always a human problem, too: not enough training, unclear expectations, silent resistance, or unresolved conflict.

As this NPR segment explored, AI and automation are changing how some jobs are done. AI is good at some things, but not everything. Jobs in the future that require communication skills and creativity might become even more valued than they are today.

Any fan of Star Trek knows that the best captains are not just commanding officers or military leaders. In order to fulfill Starfleet’s mission of space exploration and seeking out new life, they also have to be the best diplomats. Starfleet captains are most often the people initiating First Contact (or Second Contact, shoutout to Lower Decks!), long before ambassadors can arrive on the scene. As such, they need excellent communication skills to get the lay of the land and smooth over any rough patches.

Captain Freeman on Lower Decks eventually figured out that to resolve the Doopler problem, she needed to communicate clearly and directly. No AI could tell her that, and it’s her human skills that make her a great captain.

Similarly, as the people responsible for the smooth operations of the ship and its crew, the First Officers also need to have excellent people skills. Understanding human (and Vulcan, Illyrian, Borg, Ferengi, Andorian, Tellarite, Bolian, Klingon, Romulan, and on and on) behavior with all its various cultural influences, hidden traumas, and variables is something an AI just can’t do.

Nonprofit organizations have always needed to communicate well about their missions: to persuade decision makers to support good policies, to persuade advocates to join the cause, and to persuade donors to give generously. AI might be able to calculate the right time to send someone an email, an SMS, or an ad, but it can’t match human creativity and heart.

Do’s and Don’ts for Nonprofits

- Do develop your staff and encourage them to strengthen their people skills. Invest in training for conflict resolution, proactive communication skills, protocols around information sharing, and mentorship. Not every soft skill needs to be learned at a formal training. Good managers are constantly coaching their staff with specific, constructive feedback about how they handled a situation and inviting them to think about what they could have done differently.

- Don’t believe that every nonprofit job can or should be automated or handled with generative AI. It’s not true. Remember, AI is a tool, not a sentient being. Invest in your people, and invest in your tech, but don’t forget who’s who.

Star Trek has always been a beacon of hope and an earnest vision of a more peaceful, just, and cooperative future. Along with the visions of different people and different alien species working side by side in mutual harmony, Star Trek is also a touchstone for how we should be thinking about AI to fulfill our missions: whether it’s exploring strange new worlds, or helping people live better lives right here on this planet.

Raise HECK hopes we all will live long and prosper. We’d love to help you fulfill your 5-year mission. Get in touch at hello@raiseheck.com.